The Problem with Modern Search

Everyone is obsessed with one-shot answers, direct-response UX, and ever larger embedding spaces. Search is not just matching words or computing similarity. It is about understanding what makes a result truly relevant to a human being.

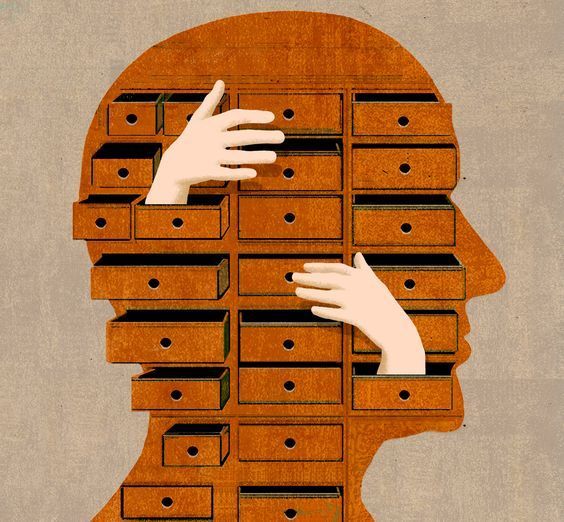

When you search for information, your brain does not only match keywords. It evaluates relevance on multiple axes at once. Sometimes a document matters because it directly answers the question. Sometimes it matters because it is better than the alternatives. Sometimes it matters because of how it fits into the entire ranked set. Those are different ranking problems, and collapsing them too early flattens the search experience.

SRSWTI Hilbert Search

What if a search algorithm could reason about relevance more like we do? That is the core idea behind SRSWTI Hilbert Search. Instead of betting on one training objective, it learns relevance from three angles: pointwise, pairwise, and listwise.

```python
# Different ways to learn relevance
query = "machine learning artificial intelligence"
documents = [
    "Machine learning is transforming how we approach AI",
    "Python programming language is known for simplicity",
    "Deep neural networks require computational resources",
]

# Pointwise: How relevant is each document by itself?
relevance = [0.95, 0.3, 0.8]

# Pairwise: Which document is better?
# Doc1 > Doc3 > Doc2

# Listwise: How do they all rank together?
# Understanding the entire result set
```

The system learns from human relevance judgments. For a query about machine learning, it should rank AI papers above general programming posts. For a query about traditional food preparation, it should understand that authentic technique matters more than a casual mention of food. The point is not to maximize similarity in the abstract; the point is to learn how humans decide that something is worth seeing first.

The Three Dimensions of Relevance Learning

Before we rank, we need features that describe the relationship between a query and a document. For each query-document pair, I compute a compact feature vector that mixes classical retrieval and semantic information.

$$ f(q, d) = \left[f_{\mathrm{tfidf}}, f_{\mathrm{semantic}}, f_{\mathrm{length}}, f_{\mathrm{rel\_length}}\right] $$

- $f_{\mathrm{tfidf}}$: TF-IDF similarity between the query and the document

- $f_{\mathrm{semantic}}$: semantic similarity from sentence embeddings

- $f_{\mathrm{length}}$: raw document length

- $f_{\mathrm{rel\_length}}$: relative length, computed as $\mathrm{len}(d) / \mathrm{mean}(\mathrm{len}(D))$

```python
import numpy as np

# Core feature extraction
# (query_tfidf, tfidf_matrix, query_embedding, doc_embeddings are computed earlier)
tfidf_scores = (query_tfidf @ tfidf_matrix.T).toarray()[0]
semantic_scores = np.inner(query_embedding, doc_embeddings)
# Use an array so the division below is element-wise; a plain list would raise TypeError
doc_lengths = np.array([len(doc.split()) for doc in documents])
relative_lengths = doc_lengths / np.mean(doc_lengths)
```

That feature basis gives the model enough signal to reason about lexical alignment, meaning, and document shape without pretending that one representation is sufficient for every domain.

The Trinity of Ranking Approaches

1. Pointwise Ranking: Absolute Relevance

The pointwise ranker predicts an absolute relevance score for each document independently. For a feature vector $x$, it learns:

$$ r(x) = \sigma\left(W_2 \operatorname{ReLU}(W_1x + b_1) + b_2\right) $$

Using a sigmoid keeps scores in the $[0, 1]$ range. Training uses a simple mean-squared error objective:

$$ L_{\mathrm{point}} = \frac{1}{n}\sum_{i=1}^{n}\left(r(x_i) - y_i\right)^2 $$

Pointwise ranking is useful because it gives a calibrated notion of document quality, not just ordering.
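To make the pointwise objective concrete, here is a minimal sketch in plain Python. It assumes the network has already produced one raw (pre-sigmoid) score per document; the raw values below are hypothetical, while the labels are the pointwise judgments from the opening example.

```python
import math


def sigmoid(z: float) -> float:
    """Squash a raw score into the (0, 1) range."""
    return 1.0 / (1.0 + math.exp(-z))


def pointwise_mse(raw_scores, labels):
    """Mean-squared error between sigmoid-squashed scores and relevance labels."""
    preds = [sigmoid(s) for s in raw_scores]
    return sum((p - y) ** 2 for p, y in zip(preds, labels)) / len(labels)


# Hypothetical raw network outputs for the three example documents
raw_scores = [2.0, -1.0, 1.0]
labels = [0.95, 0.3, 0.8]

loss = pointwise_mse(raw_scores, labels)
print(f"pointwise MSE: {loss:.4f}")
```

Each document contributes to the loss independently, which is exactly what makes this view simple and also what keeps it blind to ordering.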

2. Pairwise Ranking: Learning Preferences

The pairwise ranker learns from document comparisons. Instead of asking “how relevant is this document in isolation?”, it asks “which of these two documents should rank higher?” For documents $d_i$ and $d_j$, the probability that $d_i$ beats $d_j$ is:

$$ P(d_i > d_j) = \sigma\left(r(x_i) - r(x_j)\right) $$

The learning objective follows the RankNet formulation:

$$ L_{\mathrm{pair}} = -\sum_{(i,j)} \left[\bar{P}_{ij}\log P_{ij} + (1-\bar{P}_{ij})\log(1-P_{ij})\right] $$

Here, $\bar{P}_{ij}=1$ when document $i$ should outrank document $j$, and $0$ otherwise. Pairwise training is great at sharpening preference boundaries between close candidates.
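The pairwise loss can be sketched the same way. This is a minimal, standard-library illustration of the RankNet formula above, not a training loop; skipping tied labels is an assumption I am making for the example, and the raw scores are hypothetical.

```python
import math
from itertools import combinations


def ranknet_loss(scores, labels):
    """Sum of pairwise cross-entropy terms over document pairs with different labels."""
    total = 0.0
    for i, j in combinations(range(len(scores)), 2):
        if labels[i] == labels[j]:
            continue  # assumption: a tie carries no preference to learn from
        target = 1.0 if labels[i] > labels[j] else 0.0
        # P(d_i > d_j) = sigmoid(s_i - s_j)
        p_ij = 1.0 / (1.0 + math.exp(-(scores[i] - scores[j])))
        total -= target * math.log(p_ij) + (1.0 - target) * math.log(1.0 - p_ij)
    return total


labels = [0.95, 0.3, 0.8]        # Doc1 > Doc3 > Doc2
good_scores = [2.0, -1.0, 1.0]   # hypothetical scores that agree with the labels
bad_scores = [-1.0, 2.0, 1.0]    # hypothetical scores that invert the top two

good_loss = ranknet_loss(good_scores, labels)
bad_loss = ranknet_loss(bad_scores, labels)
print(f"agreeing order: {good_loss:.4f}, inverted order: {bad_loss:.4f}")
```

Only score differences enter the loss, so shifting every score by the same constant changes nothing. For very large score gaps a production version would want a numerically stable log-sigmoid instead of the direct form used here.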

3. Listwise Ranking: Global Context

Listwise ranking treats the entire result set as the object of learning. Instead of optimizing documents or pairs independently, it models how the whole page should look.

$$ P_s(d_i \mid q) = \frac{\exp(s_i)}{\sum_{j=1}^{n_q}\exp(s_j)} $$

$$ P_y(d_i \mid q) = \frac{\exp(y_i)}{\sum_{j=1}^{n_q}\exp(y_j)} $$

$$ L_{\mathrm{list}} = -\sum_{i=1}^{n_q} P_y(d_i \mid q)\log P_s(d_i \mid q) $$

Note that the ground-truth distribution $P_y$ is derived from the relevance labels themselves, in the same way that $P_s$ is derived from the model scores. That matters because listwise learning encodes not just who wins, but how the entire ranked surface should allocate attention.
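Here is a small sketch of the listwise loss for a single query, again in plain Python. It follows the three formulas above directly: a softmax over the model scores, a softmax over the labels, and the cross-entropy between the two. The example scores are hypothetical.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    z = sum(exps)
    return [e / z for e in exps]


def listwise_loss(scores, labels):
    """Cross-entropy between the label distribution P_y and the score distribution P_s."""
    p_s = softmax(scores)
    p_y = softmax(labels)
    return -sum(py * math.log(ps) for py, ps in zip(p_y, p_s))


labels = [0.95, 0.3, 0.8]
aligned = listwise_loss([0.95, 0.3, 0.8], labels)     # scores match the labels
misordered = listwise_loss([0.3, 0.95, 0.8], labels)  # top two documents swapped

print(f"aligned: {aligned:.4f}, misordered: {misordered:.4f}")
```

The loss is smallest when the score distribution matches the label distribution, and every document in the list contributes to the normalizer, which is where the global context comes from.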

Neural Network Architecture

Each ranking approach uses the same basic scoring backbone. The difference is not the MLP itself; the difference is the loss and the way we interpret the scores.

```python
self.model = nn.Sequential(
    nn.Linear(input_dim, 64),
    nn.ReLU(),
    nn.Dropout(0.2),
    nn.Linear(64, 32),
    nn.ReLU(),
    nn.Linear(32, 1),
)
```

- Pointwise: apply a sigmoid and learn absolute relevance.

- Pairwise: compare score differences across document pairs.

- Listwise: softmax the scores across the whole candidate set.

Training Dynamics

For a training set with queries $Q$ and documents $D$, the loop looks like this:

- Extract features for every query-document pair.

- For each query $q \in Q$, train pointwise on individual $(d, y)$ pairs.

- Generate document pairs for pairwise ranking.

- Train listwise on the entire result list for that query.

The multi-view setup matters because each objective captures a different aspect of relevance. One objective is not “truer” than the others. They are different lenses on the same retrieval problem.
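The pair-generation step in that loop can be sketched as follows. This is one plausible way to do it, assuming graded labels per query; the `make_pairs` helper and its `min_gap` parameter are hypothetical names introduced for illustration.

```python
from itertools import combinations


def make_pairs(labels, min_gap=0.0):
    """Return (winner, loser) index pairs for documents whose labels differ by more than min_gap."""
    pairs = []
    for i, j in combinations(range(len(labels)), 2):
        if abs(labels[i] - labels[j]) <= min_gap:
            continue  # too close to call: skip instead of inventing a preference
        pairs.append((i, j) if labels[i] > labels[j] else (j, i))
    return pairs


# Labels from the opening example: Doc1 > Doc3 > Doc2
pairs = make_pairs([0.95, 0.3, 0.8])
print(pairs)  # [(0, 1), (0, 2), (2, 1)]
```

Raising `min_gap` drops near-ties, which trades training signal for less label noise. With a gap of 0.2, the Doc1 versus Doc3 pair would be skipped.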

1 def rank_documents(self, query: str, documents: List[str]) -> List[Tuple[int, float]]:

2 features = self.feature_extractor.extract_features(query, documents)

3 scores = self.model(torch.FloatTensor(features)).detach().numpy()

4 return sorted(enumerate(scores), key=lambda x: x[1], reverse=True)1 # Real example from our test set

2 query = "machine learning artificial intelligence"

3 top_results = ranker.rank_documents(query, documents)

4 # Returns: [

5 # "Machine learning is transforming how we approach AI..." (score: 0.95),

6 # "Deep neural networks require computational..." (score: 0.80),

7 # "Python programming language..." (score: 0.30)

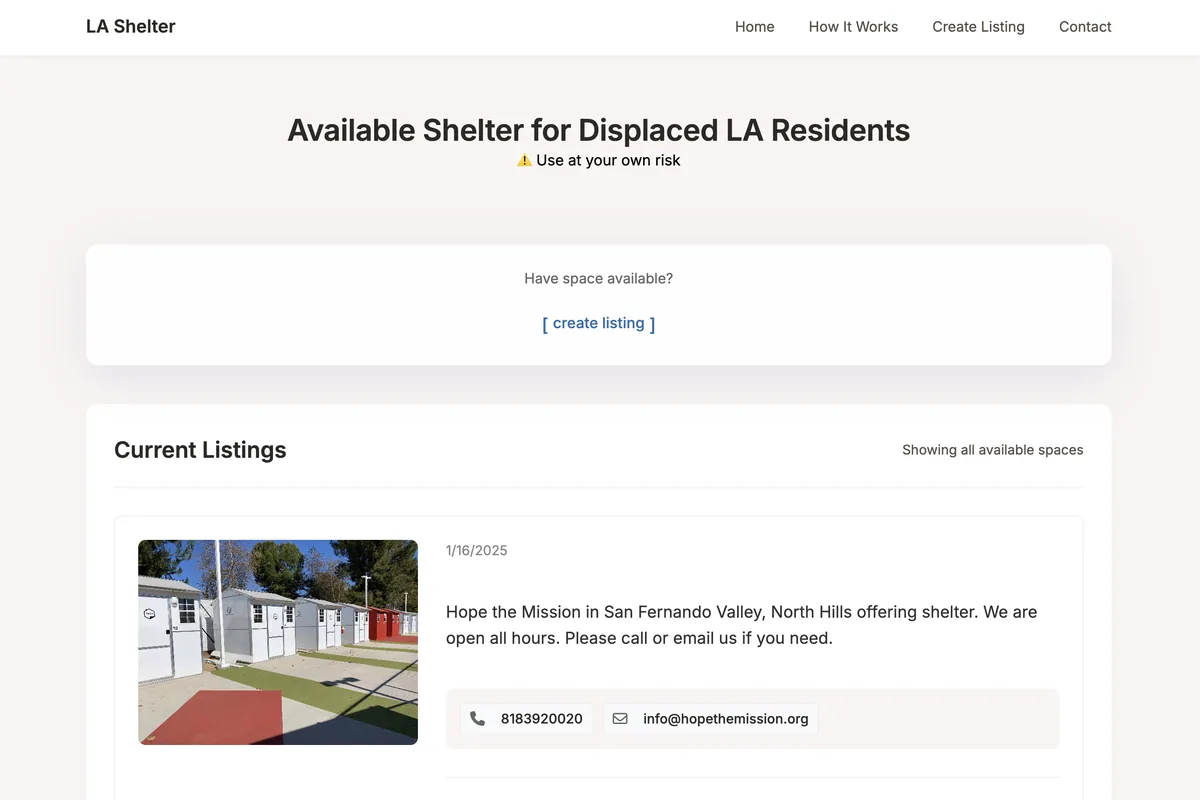

8 # ]Real-World Impact & Crisis Evaluation (RWICS)

Offline metrics are necessary, but the real test is operational. In crisis retrieval, weak ranking is not an abstract quality issue; it is a decision-quality issue. If someone is searching for evacuation routes, emergency shelter availability, triage guidance, or medication interactions, pairwise and listwise mistakes become very expensive very quickly.

Pointwise mistakes are sometimes recoverable because a good result may still appear somewhere. Pairwise mistakes reorder close candidates and quietly degrade trust. Listwise mistakes are the worst because they distort the entire screen, pushing the wrong cluster of documents to the top and starving the right cluster of visibility.

That is why I care about all three objectives together. Relevance is not just an academic metric; it is a crisis interface problem.

SRSWTI Binary Independence: Classical Retrieval Still Matters

Modern search discourse can get weirdly dismissive about classical IR. BM25 still matters. Documents are not just points in embedding space; they are discrete probability events with term frequency structure, scarcity patterns, and local context windows. Ignoring that leaves performance on the table.

1. BM25: The Classical Foundation

$$ \operatorname{BM25}(D, Q) = \sum_{i=1}^{n}\operatorname{IDF}(q_i) \cdot \frac{f(q_i, D)(k_1 + 1)} {f(q_i, D) + k_1\left(1 - b + b\frac{|D|}{\operatorname{avgdl}}\right)} $$

$$ \operatorname{IDF}(q_i) = \log\left(\frac{N - n(q_i) + 0.5}{n(q_i) + 0.5}\right) $$

BM25 gives us rarity, saturation control, and document-length normalization. It is not glamorous, but it is one of the few ranking functions that keeps earning its place.
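For readers who want to see the formula move, here is a compact BM25 sketch using only the standard library. It implements the two equations above as written. Whitespace tokenization and the parameter values `k1=1.5` and `b=0.75` are assumptions (they are common defaults, not values taken from this system).

```python
import math
from collections import Counter


def bm25_scores(query, documents, k1=1.5, b=0.75):
    """Score every document against the query with the BM25 formula above."""
    tokenized = [doc.lower().split() for doc in documents]
    n_docs = len(tokenized)
    avgdl = sum(len(toks) for toks in tokenized) / n_docs
    # n(q_i): number of documents that contain each term at least once
    doc_freq = Counter(term for toks in tokenized for term in set(toks))

    scores = []
    for toks in tokenized:
        tf = Counter(toks)
        score = 0.0
        for term in query.lower().split():
            f = tf[term]
            if f == 0:
                continue  # absent terms contribute nothing
            n_q = doc_freq[term]
            idf = math.log((n_docs - n_q + 0.5) / (n_q + 0.5))
            length_norm = 1 - b + b * len(toks) / avgdl
            score += idf * f * (k1 + 1) / (f + k1 * length_norm)
        scores.append(score)
    return scores


documents = [
    "Machine learning is transforming how we approach AI",
    "Python programming language is known for simplicity",
    "Deep neural networks require computational resources",
]
scores = bm25_scores("machine learning", documents)
print(scores)
```

One thing worth knowing about this IDF form: it goes negative for any term that appears in more than half of the documents, so very common query terms can pull a score down. Many implementations add a floor or a `+ 1` inside the logarithm to avoid that.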

2. Semantic Similarity: Beyond Words

```python
query_embedding = self.embedder.encode([query])[0]
doc_embeddings = self.embedder.encode(documents)
semantic_scores = cosine_similarity([query_embedding], doc_embeddings)[0]
```

Embeddings bring intent alignment. They help the system understand that “neural ranking” and “learning to rank” may belong in the same neighborhood even when the surface forms differ.

3. Proximity Magic: The Context Dance

Words do not just matter individually; their spacing matters. A search system should care when the key terms show up together in a tight, coherent span instead of being scattered across an irrelevant document.

$$ S_{\mathrm{proximity}} = \frac{1}{1 + \operatorname{avg}(\min(\operatorname{distances}))} $$

$$ S_{\mathrm{final}} = w_{\mathrm{bm25}}S_{\mathrm{bm25}} + w_{\mathrm{semantic}}S_{\mathrm{semantic}} + w_{\mathrm{proximity}}S_{\mathrm{proximity}} $$

```python
query = "machine learning techniques"
results = engine.hybrid_search(
    query,
    documents,
    weights={'bm25': 0.4, 'semantic': 0.4, 'proximity': 0.2}
)
```

That hybrid matters because it lets the engine reward three things at once:

- statistical significance from BM25

- semantic intent alignment from embeddings

- contextual tightness from proximity
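The proximity term and the final blend can be sketched in a few lines. The formula leaves some room for interpretation, so this example makes its reading explicit: for every pair of distinct query terms found in the document, take the smallest distance between their positions, then average those minima. Returning 0 when fewer than two query terms are present is also an assumption, as are the helper names.

```python
from itertools import combinations


def proximity_score(query, document):
    """1 / (1 + average of the minimum token distances between query-term pairs)."""
    tokens = document.lower().split()
    positions = {}
    for term in set(query.lower().split()):
        hits = [i for i, tok in enumerate(tokens) if tok == term]
        if hits:
            positions[term] = hits
    if len(positions) < 2:
        return 0.0  # assumption: proximity is undefined without two matched terms
    min_distances = [
        min(abs(i - j) for i in positions[a] for j in positions[b])
        for a, b in combinations(sorted(positions), 2)
    ]
    return 1.0 / (1.0 + sum(min_distances) / len(min_distances))


def hybrid_score(bm25, semantic, proximity, weights):
    """Weighted sum of the three signals, assuming each is already on a comparable scale."""
    return (
        weights["bm25"] * bm25
        + weights["semantic"] * semantic
        + weights["proximity"] * proximity
    )


tight = proximity_score("machine learning", "machine learning is fun")
loose = proximity_score("machine learning", "machine tools help with language learning")
print(tight, loose)

weights = {"bm25": 0.4, "semantic": 0.4, "proximity": 0.2}
final = hybrid_score(1.0, 0.5, tight, weights)
```

Raw BM25 scores are unbounded while cosine similarity and this proximity score are not, so in practice the BM25 component likely needs normalizing (for example, dividing by the maximum score for the query) before the weights mean what they appear to mean.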

SRSWTI Advanced PageRank: Rethinking Document Relationships

Traditional PageRank assumes explicit links between documents. A web page points to another page, and the graph already exists. In a document collection, those edges are mostly invisible. So we construct them from semantic affinity.

```python
similarity_matrix = cosine_similarity(self.doc_embeddings)
```

For any two documents $d_i$ and $d_j$, we create an edge when their similarity crosses a threshold:

$$ A_{ij} = \begin{cases} \operatorname{sim}(d_i, d_j), & \text{if } \operatorname{sim}(d_i, d_j) > \tau \\ 0, & \text{otherwise} \end{cases} $$

The PageRank score becomes:

$$ PR(d_i) = (1 - \alpha)\sum_{d_j \in M(i)} \frac{PR(d_j)}{|C(d_j)|} + \alpha E(d_i) $$

At query time, we mix graph centrality with direct query similarity:

$$ S(d \mid q) = \lambda PR(d) + (1 - \lambda)\operatorname{sim}(q, d) $$

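```python
import networkx as nx
import numpy as np

# Build the semantic graph; skip self-loops (every document matches itself)
G = nx.DiGraph()
G.add_nodes_from(range(len(documents)))  # keep isolated documents in the graph
for i in range(len(documents)):
    for j in range(len(documents)):
        if i != j and similarity_matrix[i][j] > threshold:
            G.add_edge(i, j, weight=similarity_matrix[i][j])
```

```python
# networkx's alpha is the damping factor on the link term, i.e. 1 - alpha in the formula above
pagerank_scores = nx.pagerank(G, alpha=0.85)
```

```python
# nx.pagerank returns a dict keyed by node; lam is the mixing weight (lambda above)
pr = np.array([pagerank_scores[i] for i in range(len(documents))])
final_scores = lam * pr + (1 - lam) * similarities
```

```python
# connected_components is not defined for directed graphs, so use the weak variant
clusters = {
    idx: list(component)
    for idx, component in enumerate(nx.weakly_connected_components(G))
}
```

This gives us something useful: documents can rank highly not only because they match the query directly, but because they are central to a semantic discourse community around that query. A strong document about neural networks in autonomous driving can surface for an electric-vehicles query because the semantic graph knows those neighborhoods overlap.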

And because the query-similarity term stays in the scoring function, high-authority but off-topic documents do not get a free pass. That combination keeps the graph useful without letting it drift.
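To see how the two formulas interact without any graph library, here is a small power-iteration sketch. It follows the PageRank equation above with $\alpha$ as the teleport weight and a uniform $E(d_i) = 1/n$, and it counts out-links unweighted, as $|C(d_j)|$ suggests. Spreading the rank of documents with no out-links uniformly is an assumption, and the adjacency values are hypothetical.

```python
def pagerank(adjacency, teleport=0.15, iterations=100):
    """Power iteration for PR(d_i) = (1 - a) * sum(PR(d_j) / |C(d_j)|) + a / n."""
    n = len(adjacency)
    ranks = [1.0 / n] * n
    out_degree = [sum(1 for w in row if w > 0) for row in adjacency]
    for _ in range(iterations):
        # assumption: documents with no out-links share their rank with everyone
        dangling = sum(ranks[j] for j in range(n) if out_degree[j] == 0)
        new_ranks = []
        for i in range(n):
            inbound = sum(
                ranks[j] / out_degree[j] for j in range(n) if adjacency[j][i] > 0
            )
            new_ranks.append((1 - teleport) * (inbound + dangling / n) + teleport / n)
        ranks = new_ranks
    return ranks


def blend(pagerank_scores, query_similarities, lam=0.5):
    """S(d | q) = lam * PR(d) + (1 - lam) * sim(q, d)."""
    return [lam * p + (1 - lam) * s for p, s in zip(pagerank_scores, query_similarities)]


# Hypothetical thresholded similarity matrix: document 0 is similar to both others
adjacency = [
    [0.0, 0.8, 0.7],
    [0.8, 0.0, 0.0],
    [0.7, 0.0, 0.0],
]
ranks = pagerank(adjacency)
final = blend(ranks, [0.1, 0.9, 0.2], lam=0.5)
print(ranks, final)
```

In this toy graph document 0 is the most central, but document 1 still wins the final ranking because its query similarity is much higher. That is the guardrail described above: centrality helps, but it cannot carry an off-topic document on its own.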

That is why I call it Hilbert Search: not because relevance lives in only three literal dimensions, but because multiple axes of evidence can be projected into a shared geometric space and reasoned about holistically.

MMF — Multi Modal Fusion

Now for the fun part. Google’s MUM is the clearest proof that modern search is no longer a pure text-ranking problem. The hard queries are multimodal, multilingual, and multi-step. Even if we cannot replicate Google-scale infrastructure, we can still build a focused version of the same idea.

Think about a query like: “I hiked Mt. Adams and now want to hike Mt. Fuji next fall. What should I do differently to prepare?” The system has to reason about elevation, seasonal weather, fitness requirements, cultural context, and gear. That is not one task. It is a bundle of tasks routed through one search interface.

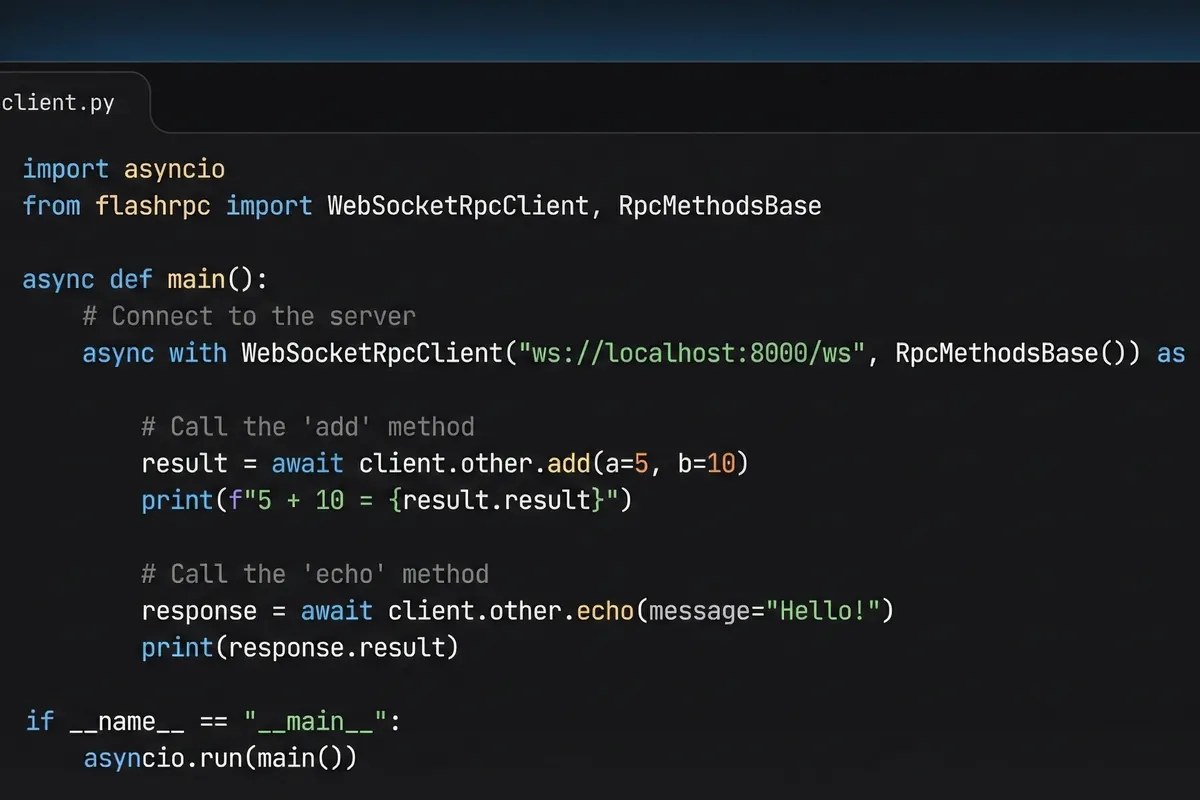

Phase 1: Text Understanding and Task Routing

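```python
from transformers import AutoModel

# TaskRouter and SearchTransformer are project components not shown here
class SRSWTIMumCore:
    def __init__(self):
        self.text_encoder = AutoModel.from_pretrained("xlm-roberta-large")
        self.task_router = TaskRouter()
        self.search_head = SearchTransformer()
```

- multi-language text encoding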

- query intent understanding

- task identification and routing

Phase 2: Adding Eyes to the Brain

```python
from transformers import CLIPModel

# CrossModalTransformer is a project component not shown here
class MultimodalFusion:
    def __init__(self):
        self.vision_encoder = CLIPModel.from_pretrained("openai/clip-vit-base-patch32")
        self.fusion_layer = CrossModalTransformer()

    def fuse_features(self, text_features, image_features):
        return self.fusion_layer(text_features, image_features)
```

- image understanding with CLIP

- cross-modal feature fusion

- joint text-image representations

- multimodal ranking

Phase 3: The Multitask Brain

```python
class TaskHeads:
    def __init__(self):
        self.search_head = TransformerDecoder()
        self.qa_head = QuestionAnswering()
        self.summary_head = Summarization()
```

- task-specific heads

- cross-task knowledge sharing

- dynamic routing based on query shape

Phase 4: Integration

```python
results = mum_engine.process_query(
    text="Compare hiking Mt. Adams vs Mt. Fuji in fall",
    images=[hiking_gear_img, mt_fuji_img],
    tasks=['comparison', 'recommendations']
)
```

This is the larger ambition: a search system that does not just find matching documents, but can fuse modalities, route subtasks, and compose results into a higher-quality answer surface. That is what makes MUM-style systems special. They hold multiple constraints in the same inference loop.

I want SRSWTI to move in that direction without pretending we have infinite compute. The early versions can still be CPU-realistic, modular, and ensemble-friendly. But the destination is clear: more holistic search, less brittle retrieval theater.